APRIL 12, 2022

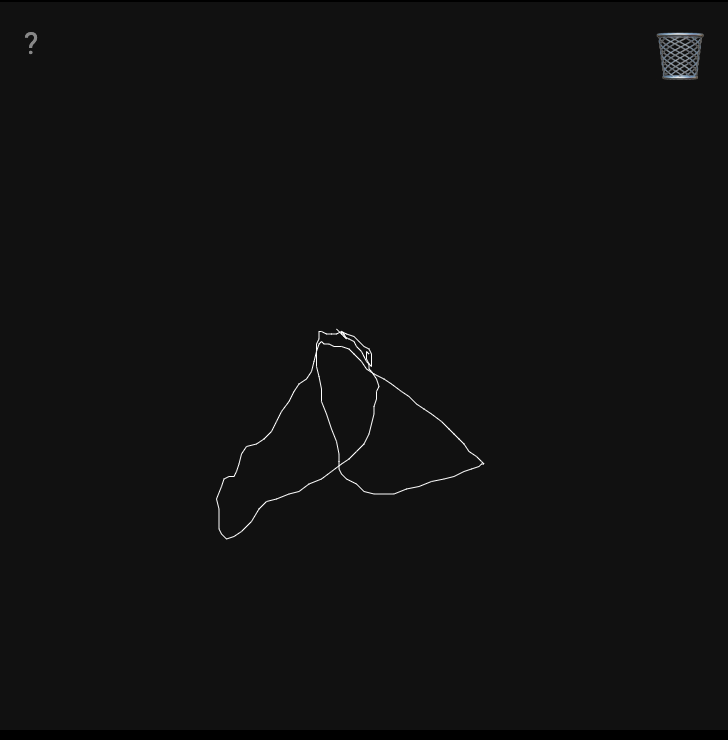

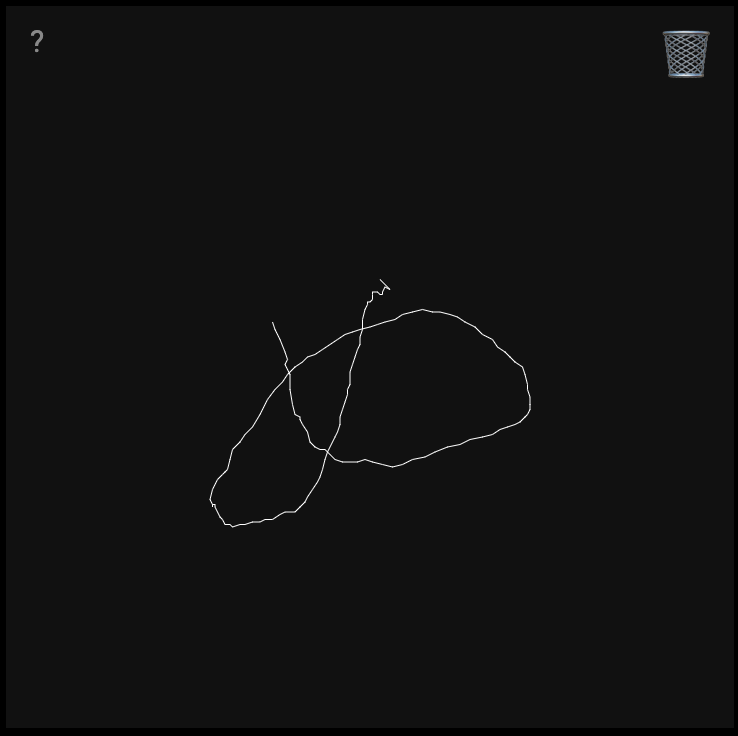

Two shapes having anxiety

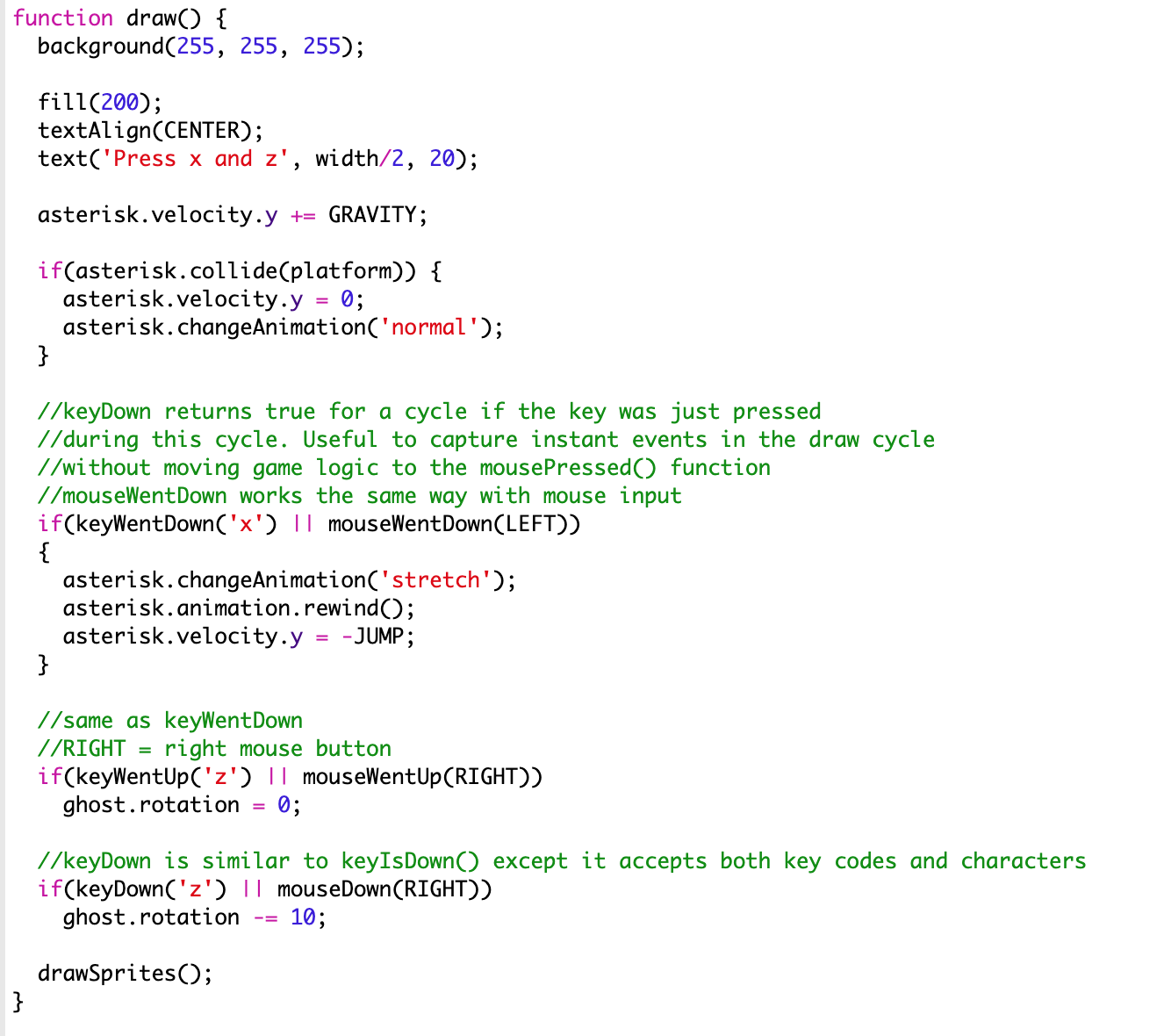

p5 libraries used: http://molleindustria.github.io/p5.play/

I decided to prototype a video-game-esque controller that lets you give anxious shapes more anxiety. Almost like a mating dance.

Motion Differentiation

What is a motion? When does the TinyML stop recording the gesture?

- What motions are too similar?

- What happens when one motion is a part of another motion?

- Adjusting how fast the training should happen, and when it should refresh to collect a new dataset for training

- Getting a motion to stop triggering once its signal has been received once
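The last question above, reacting to a motion only once, can be sketched as a small latch. This is a rough sketch and not what the project actually runs: `makeGestureLatch`, `accept` and the 1000 ms cooldown are made-up names and values, and it assumes the classifier keeps sending the same label while a gesture is held.

```javascript
// Hypothetical helper: let a gesture label through once, then ignore
// repeats of the same label until a cooldown has passed.
// The 1000 ms default is an assumption, not a measured value.
function makeGestureLatch(cooldownMs = 1000) {
  let lastLabel = null;
  let lastTime = -Infinity;

  // Returns the label if it should trigger now, otherwise null.
  return function accept(label, nowMs) {
    const sameAsLast = label === lastLabel;
    const coolingDown = nowMs - lastTime < cooldownMs;
    if (sameAsLast && coolingDown) {
      return null; // repeat of a signal that was already handled
    }
    lastLabel = label;
    lastTime = nowMs;
    return label;
  };
}

const accept = makeGestureLatch(1000);
console.log(accept("round", 0));    // first "round" triggers
console.log(accept("round", 200));  // repeat inside the cooldown is dropped
console.log(accept("lo", 300));     // a different label triggers right away
```

In a p5 sketch the `nowMs` argument could come from `millis()`.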

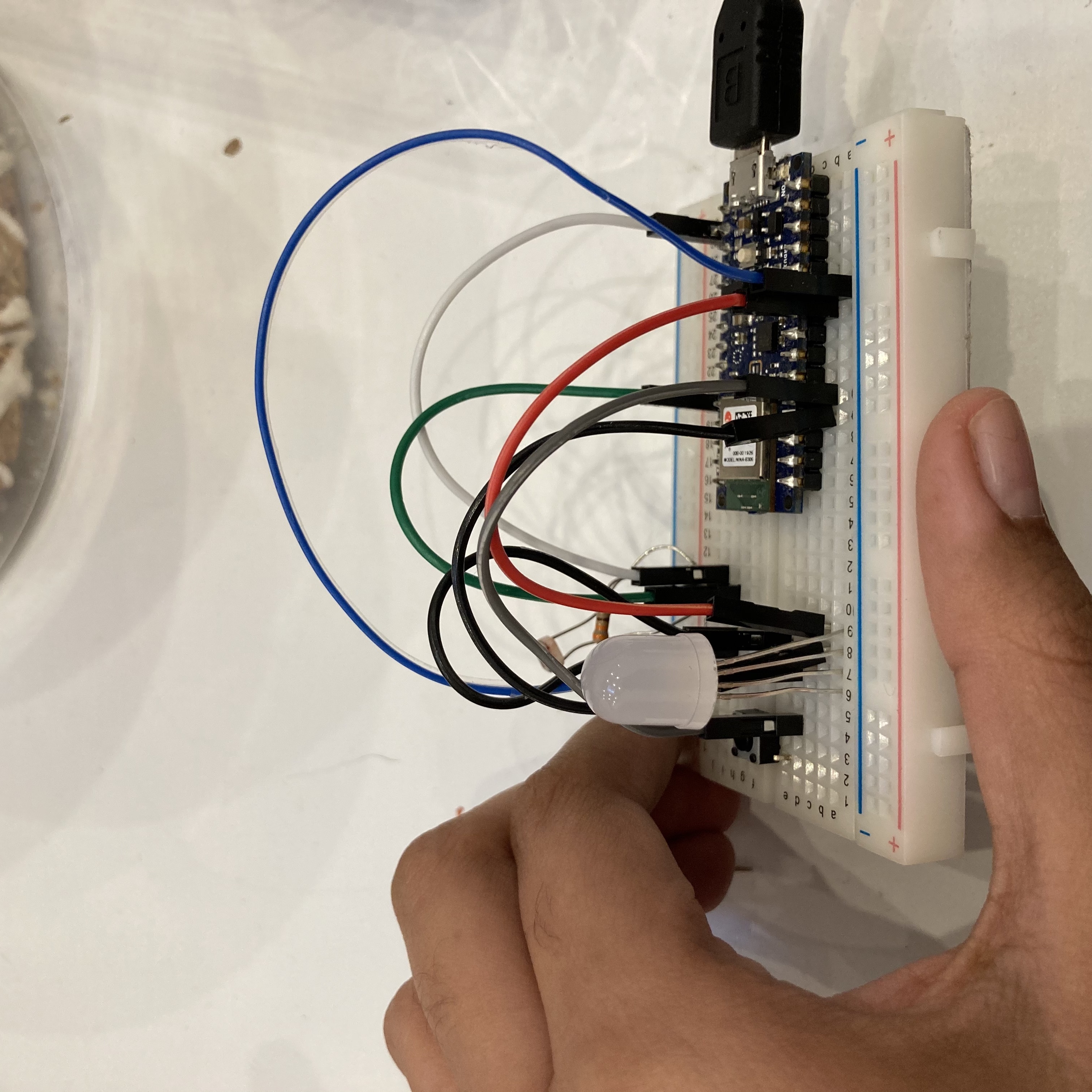

I trained with roughly 40 instances of each motion. Still, the motion paths aren’t distinct enough from each other, so the model sometimes behaves at random.

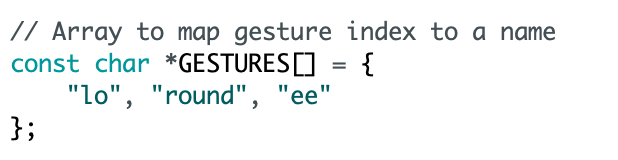

I was frustrated and randomly named the motions “lo” (forward motion), “round” (circular) and “ee” (diagonal) in classic train-wreck-naming-convention fashion.

Other qualms:

- It was tricky to get Bluetooth to work at first. I finally felt in control of this project when the Arduino light stopped blinking RGB and became a stable blue.

This was after Scott turned my Bluetooth off and on, after I had tried other, more complex ways to get the devices to pair.

Juggling a bunch of p5 libraries was also simply lovely. Sometimes I was confused about the order in which the serial stuff versus the draw stuff should happen.
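One way to think about the serial-versus-draw ordering: incoming data arrives whenever it arrives, while p5's `draw()` runs once per frame. A common pattern is to let the serial callback only store the latest value and let `draw()` decide what to do with it. The sketch below assumes that pattern. `onSerialLine` and `drawFrame` are hypothetical stand-ins, not functions from any of the libraries used here.

```javascript
// Shared state: written by the serial callback, read by the draw loop.
let latestGesture = null;

// Hypothetical handler: a serial or Bluetooth library would call
// something like this whenever a full line of text arrives.
function onSerialLine(line) {
  const label = line.trim();
  if (label.length > 0) {
    latestGesture = label; // only store, never draw from here
  }
}

// Stand-in for p5's draw(): runs every frame and consumes the
// stored value at most once.
function drawFrame() {
  const gesture = latestGesture;
  latestGesture = null;
  return gesture === null ? "idle" : "react to " + gesture;
}

onSerialLine("round\n");
console.log(drawFrame()); // reacts to the stored gesture
console.log(drawFrame()); // nothing new arrived, so the frame is idle
```

Keeping all drawing inside the frame loop means the order no longer matters: serial data can show up at any time and the next frame picks it up.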

MARCH 29, 2022

Wekinator

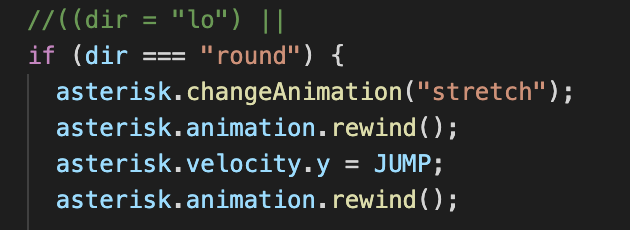

I did get this one to work:

(Scott’s Max Patch)

https://youtu.be/XpskMdiP1eA

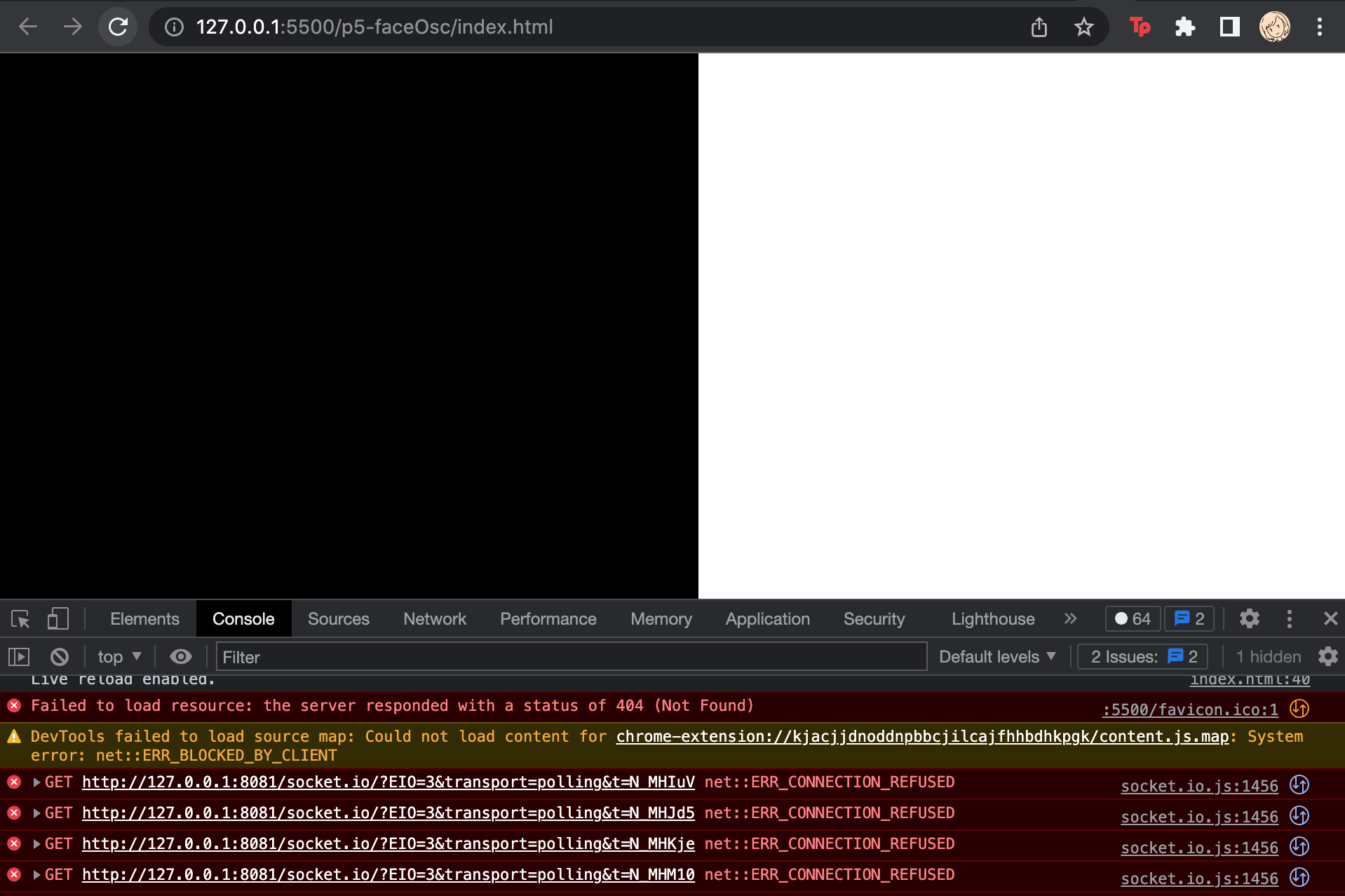

Buuut the duck doesn’t really change. I can see the values changing, but I’m not sure where to view my output. The localhost server won’t connect.

Finally got it to work! Turns out you can’t simply open the patch with Max; copy-pasting it works better.

Fail #1

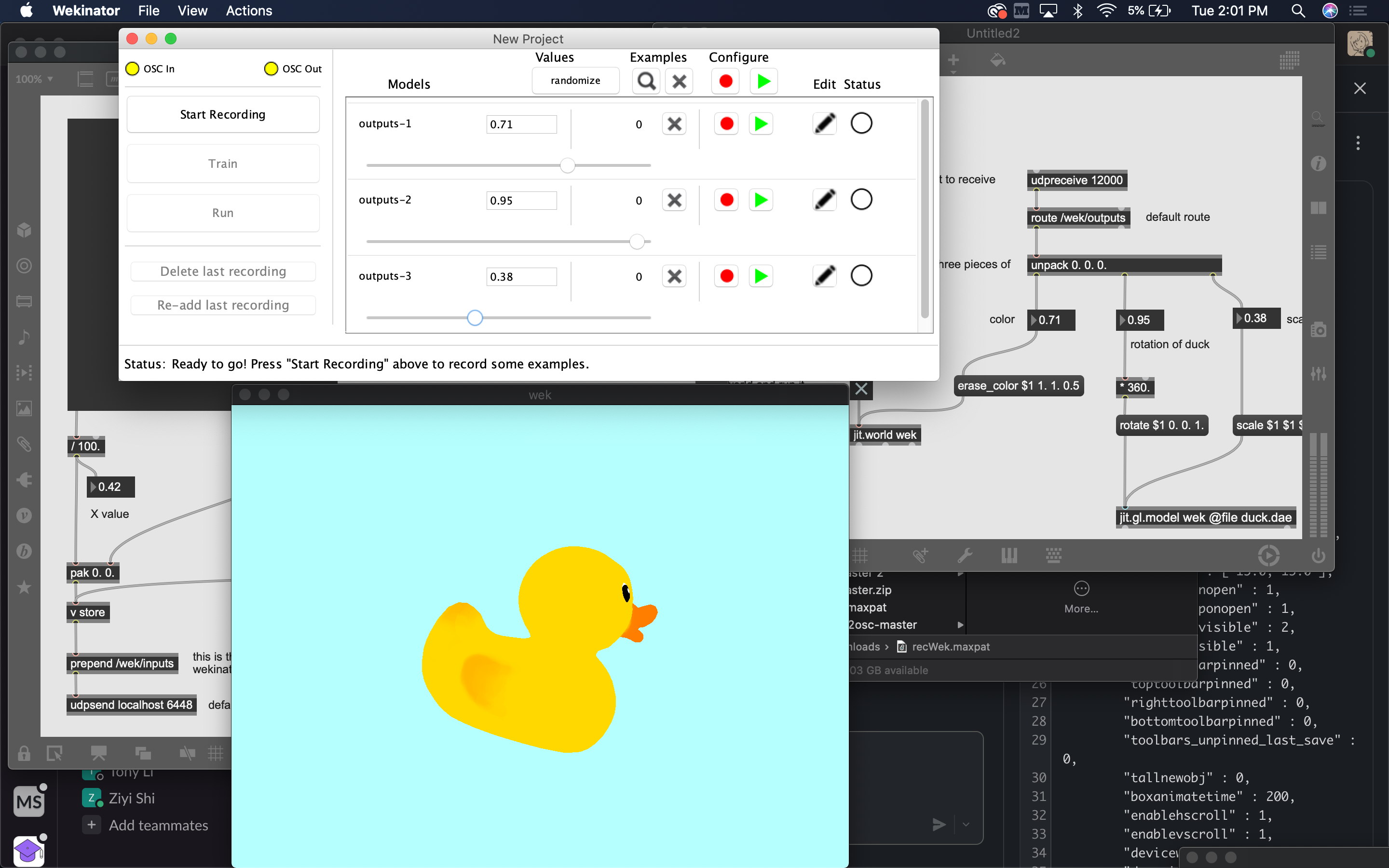

I used this word2vec plugin for Wekinator to give it input from the command line. This didn’t really work, and I wasn’t sure where to observe the output. The localhost server wouldn’t run. I will probably open an issue and see if I get a response.

Fail #2

I tried the p5 tutorials next, but they also had some components that weren’t responding. Not sure if something has been deprecated. Also, the recommended input and output ports were 3333 and 3334 respectively, but the localhost URL from the local live server didn’t reflect that. Not sure if that matters. https://github.com/genekogan/p5js-osc/blob/master/Applications.md

FWIW, Gene is running his p5 code locally in these videos; it doesn’t seem to work in the web editor.

Fail #3

At this point I’m probably giving up too soon. I tried a Max patch but couldn’t understand the input and output for audio. I should probably come back to this.

APRIL, 2022

Forager’s friend

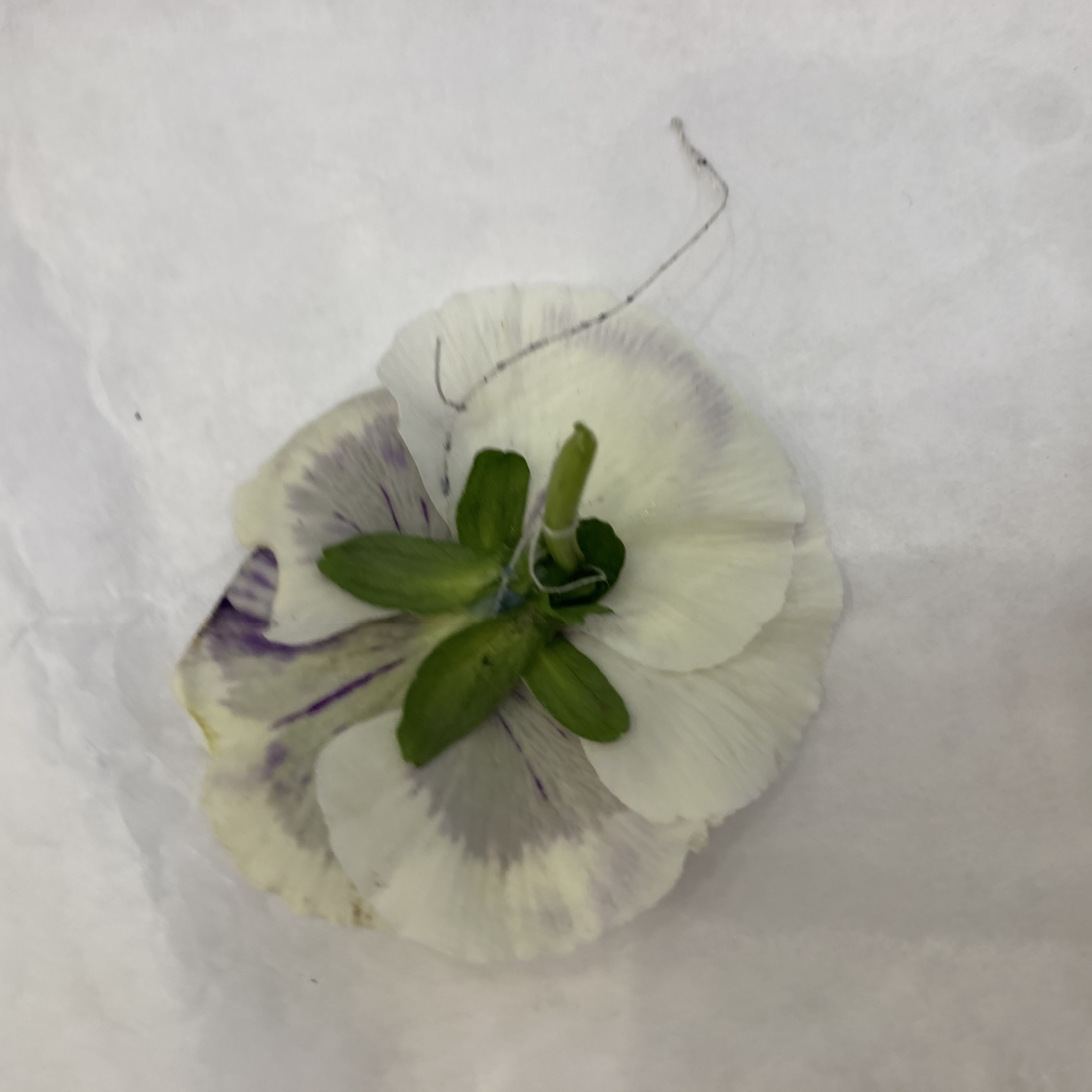

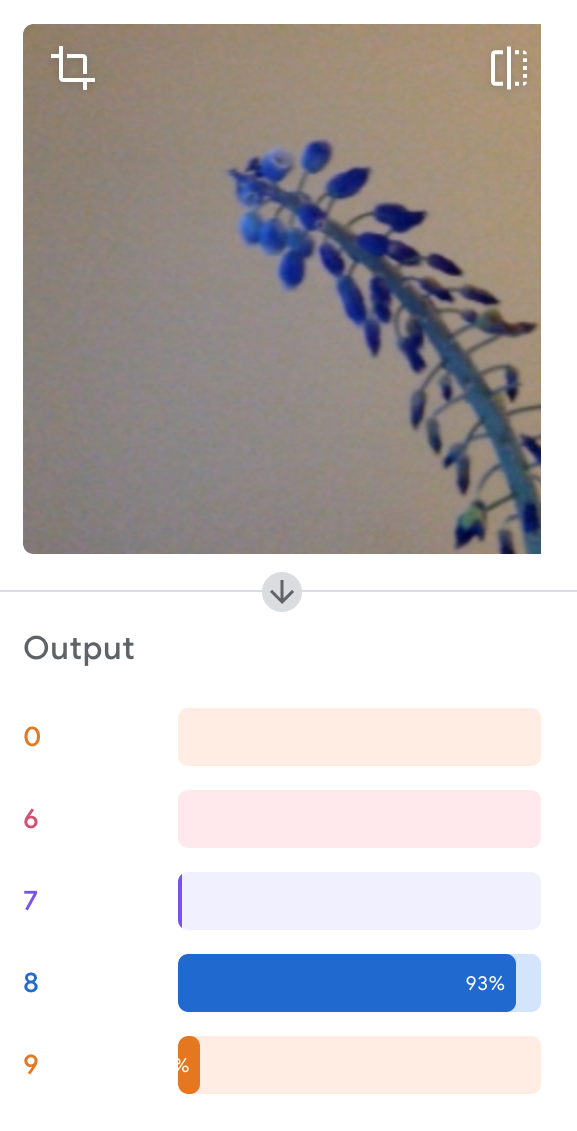

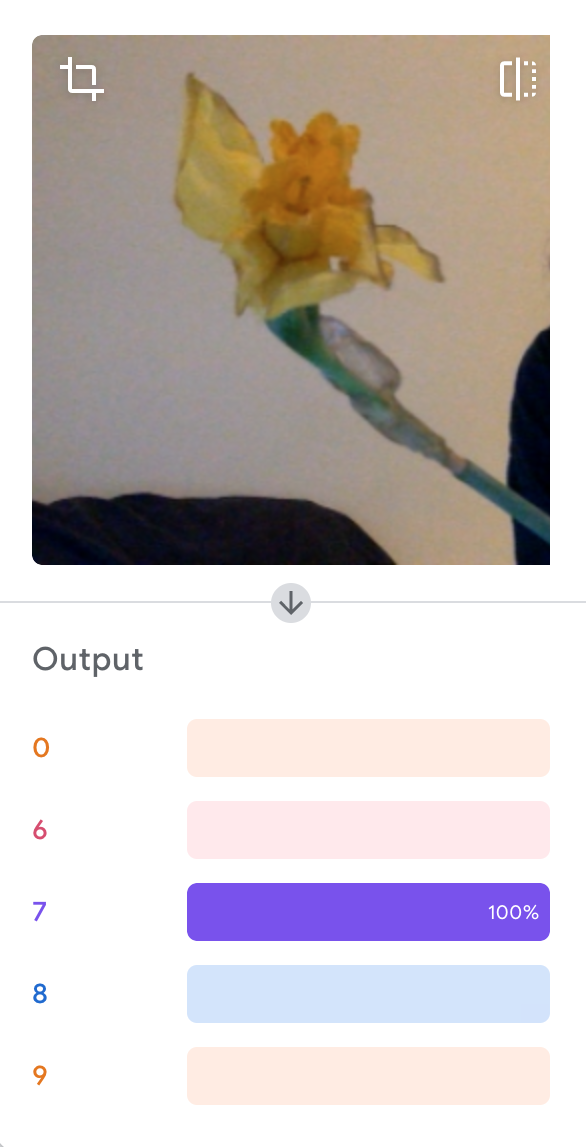

I trained my model on some early spring flowers, both edible and toxic. I captured the flowers from a variety of angles and labelled them.

Teachable Machine Link: https://teachablemachine.withgoogle.com/models/6-sQeA4nl/

p5 sketch: https://editor.p5js.org/tanvi/sketches/IdR7OB02q

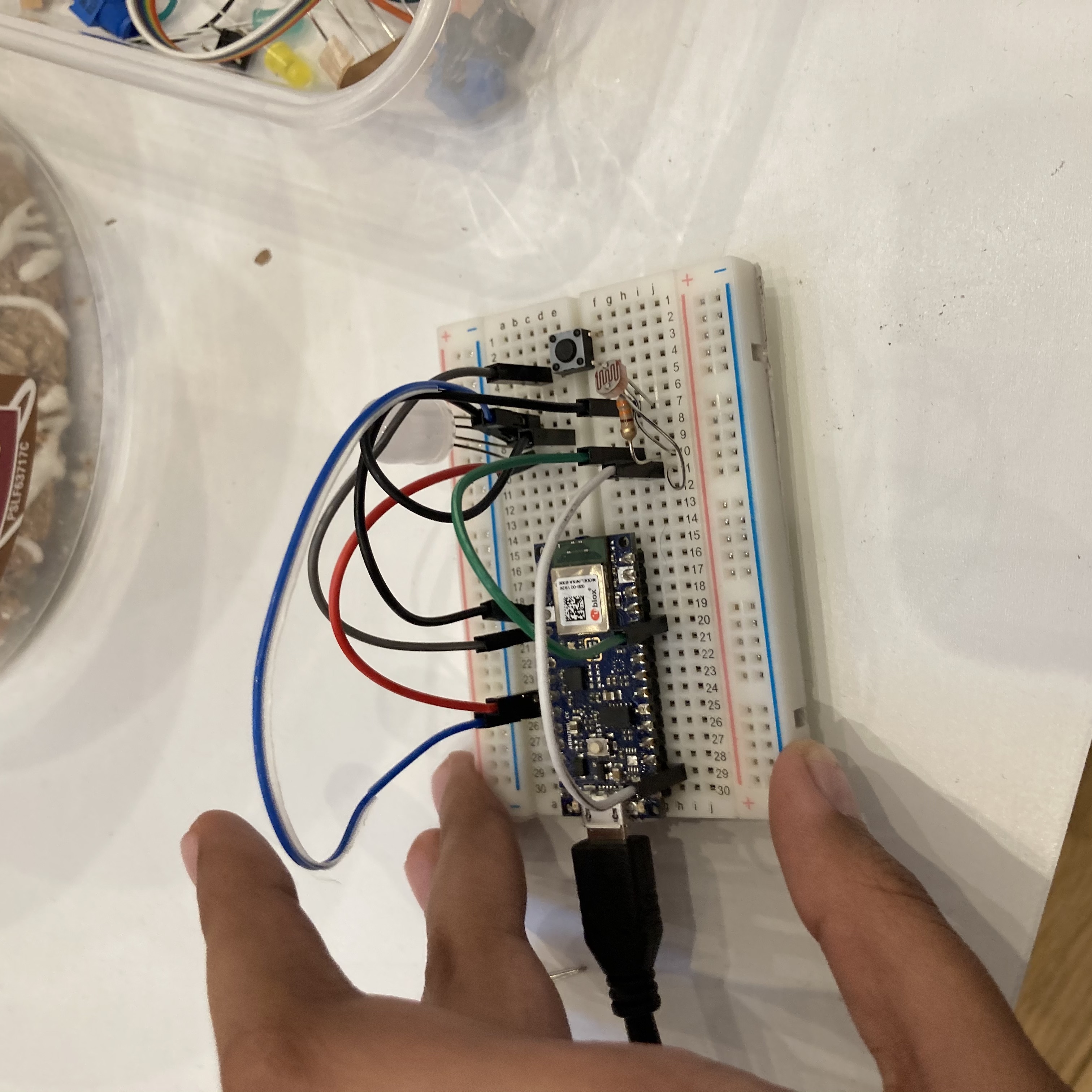

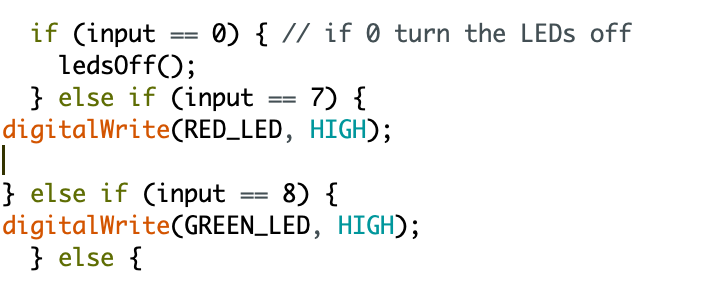

I then classified the 9 samples as toxic and non-toxic and mapped them to green and red LEDs respectively.

In action:

APRIL, 2022

🥨 Pretzels et al.

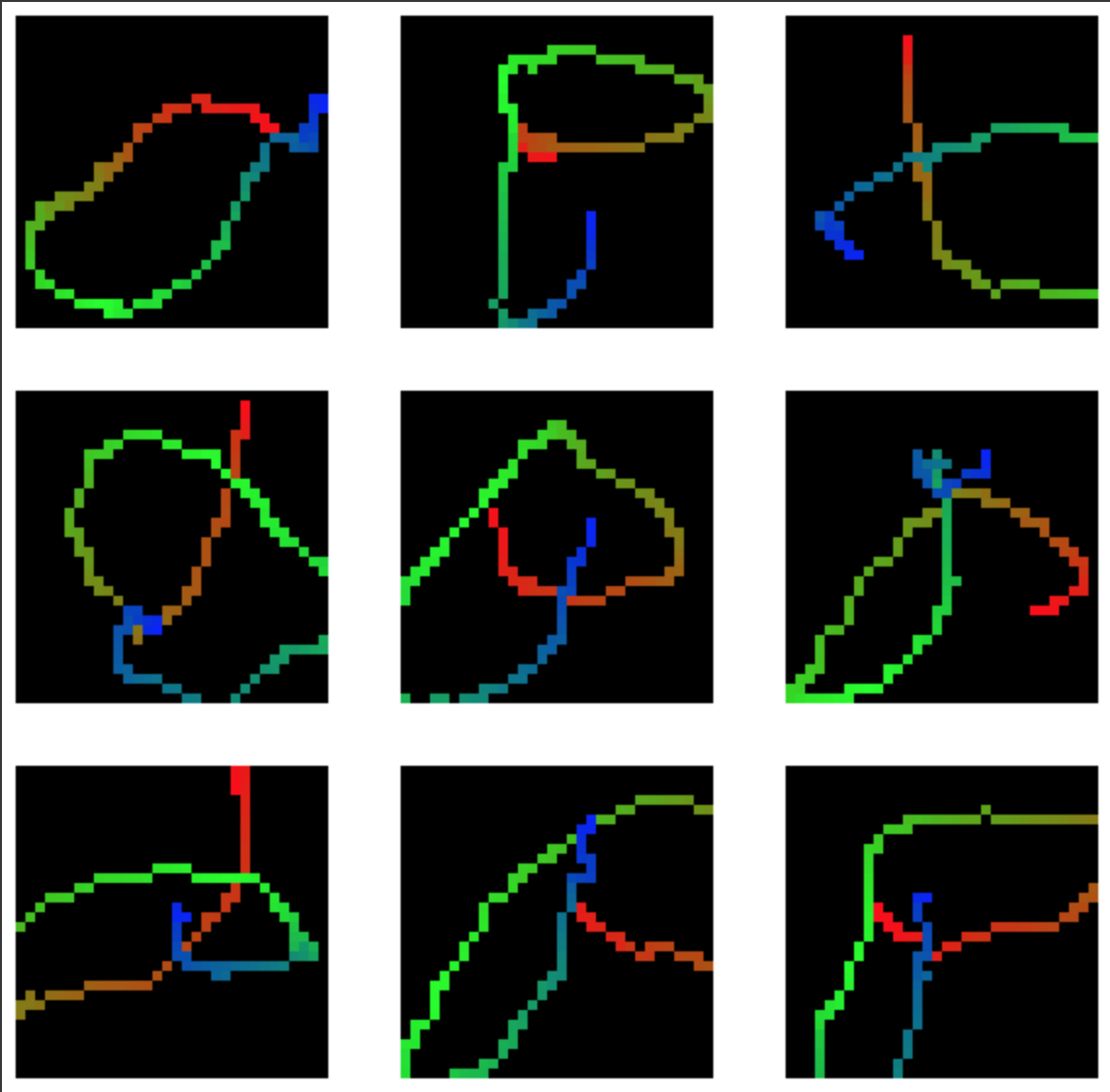

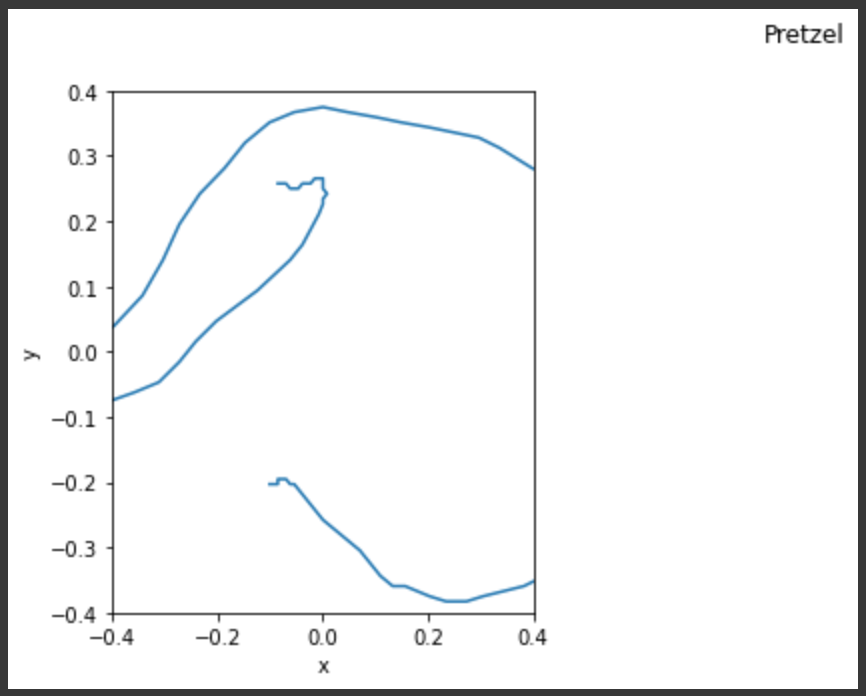

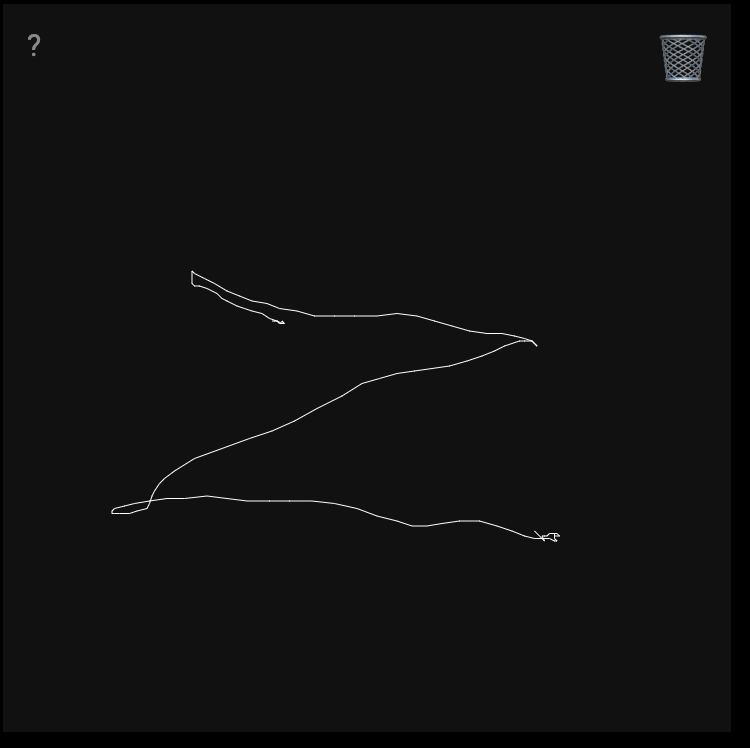

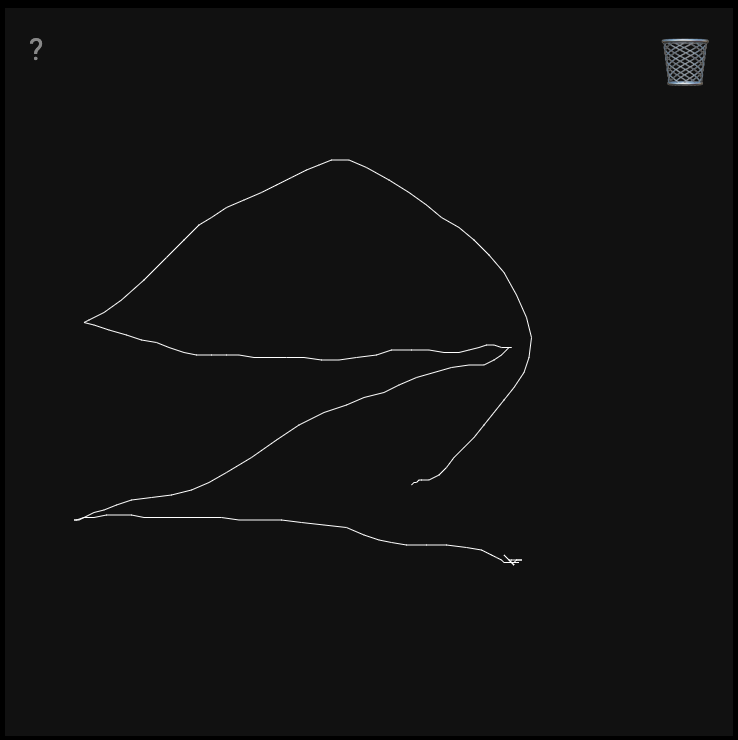

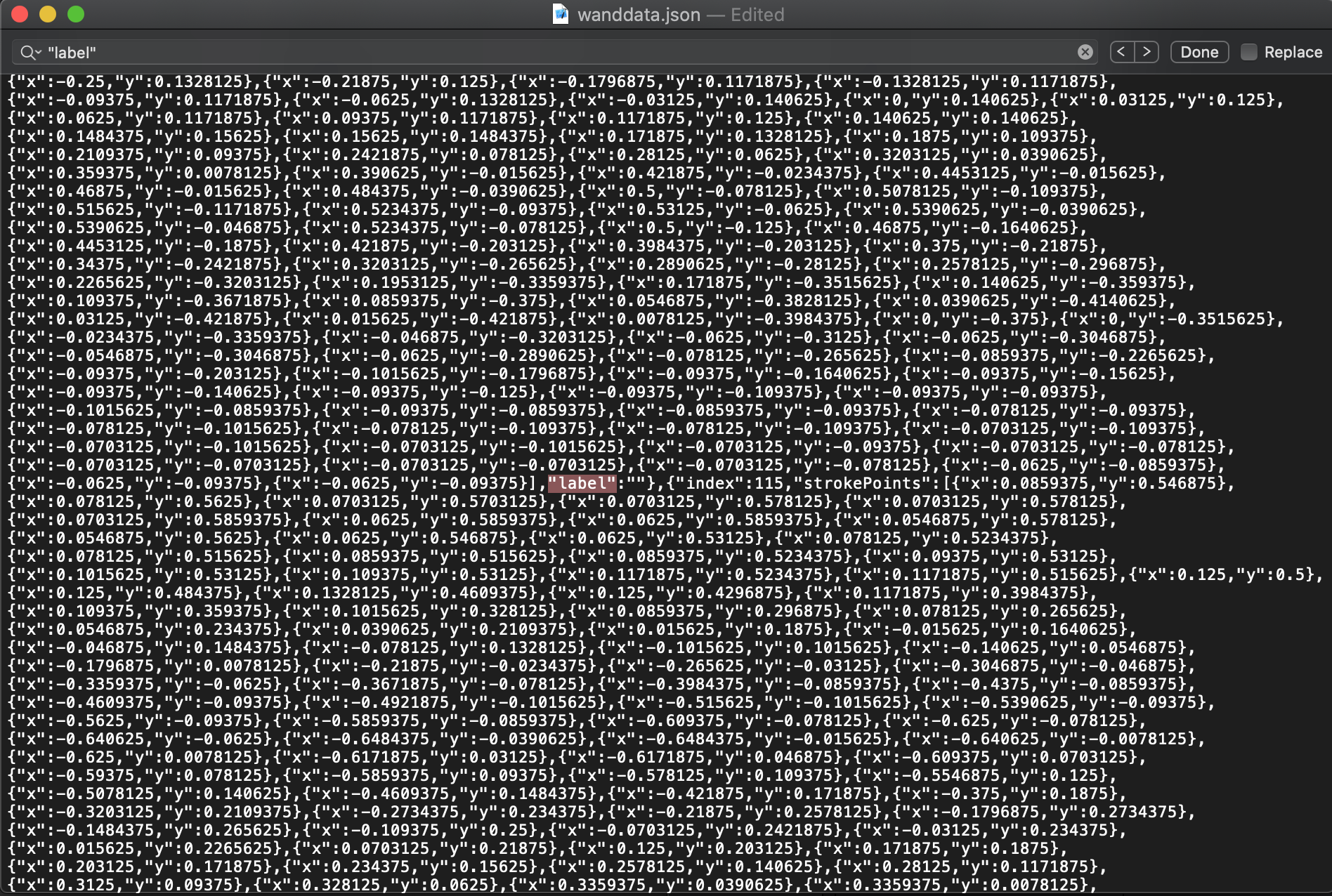

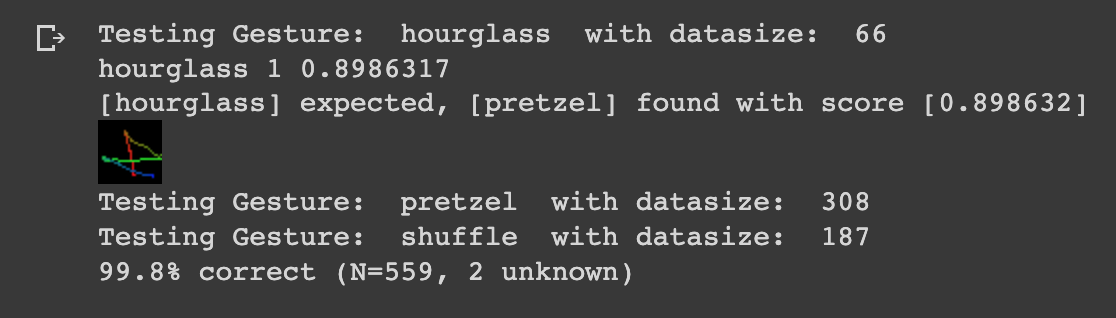

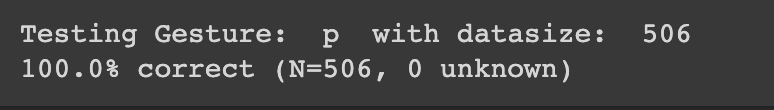

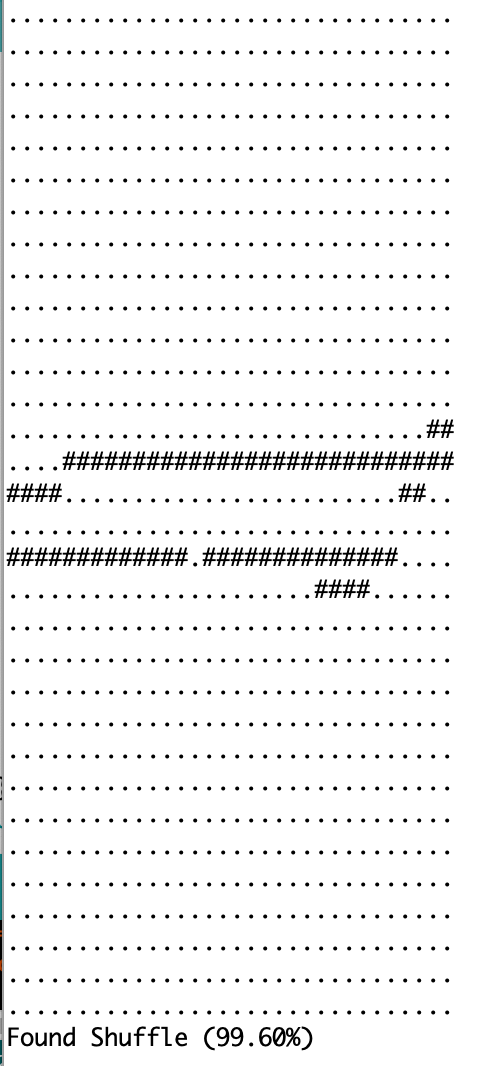

I trained my model on three shapes/gestures: Hourglass, Shuffle and Pretzel! Here is the documentation for pretzel, which was arguably the most complicated.

The ‘drawing tool’ is pretty low-fidelity; something like a cat is identifiable as a pretzel at best.

TIP: record more than you think you’ll need, then clean up. My sample set went from 130 -> 116 real quick.

she’s perfect, but she’s hard to get right.

Initially, I was trying to do a Z instead of the Hourglass, but I realised that the capture kept including the motion I made to return to the starting point at the end of each sample. Closed shapes with the same starting and ending point might work better with our capturing system.
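To make the closed-shape idea concrete, here is a small sketch that checks whether a recorded path ends near where it began. It assumes each sample is a list of `{x, y}` points, which may not match what the capture tool actually stores, and `isClosedPath` with its 0.15 tolerance is a made-up helper rather than part of the course tooling.

```javascript
// Hypothetical check: a path counts as "closed" when the gap between
// its first and last point is small compared to the size of the shape.
// The 0.15 tolerance is an assumption.
function isClosedPath(points, tolerance = 0.15) {
  if (points.length < 3) return false;

  const xs = points.map((p) => p.x);
  const ys = points.map((p) => p.y);
  const width = Math.max(...xs) - Math.min(...xs);
  const height = Math.max(...ys) - Math.min(...ys);
  const size = Math.max(width, height);
  if (size === 0) return false; // no movement at all

  const first = points[0];
  const last = points[points.length - 1];
  const gap = Math.hypot(last.x - first.x, last.y - first.y);
  return gap / size <= tolerance;
}

// A Z ends far from where it starts; an hourglass returns to the start.
const zShape = [{ x: 0, y: 0 }, { x: 10, y: 0 }, { x: 0, y: 10 }, { x: 10, y: 10 }];
const hourglass = [...zShape, { x: 0, y: 0 }];
console.log(isClosedPath(zShape));    // false
console.log(isClosedPath(hourglass)); // true
```

With a closed shape, the hand is already back at the start when the sample ends, so there is no extra reset motion for the recorder to pick up.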

Error 1: I had some labelling issues too. Not sure why these happen; I went into the JSON to fix them.

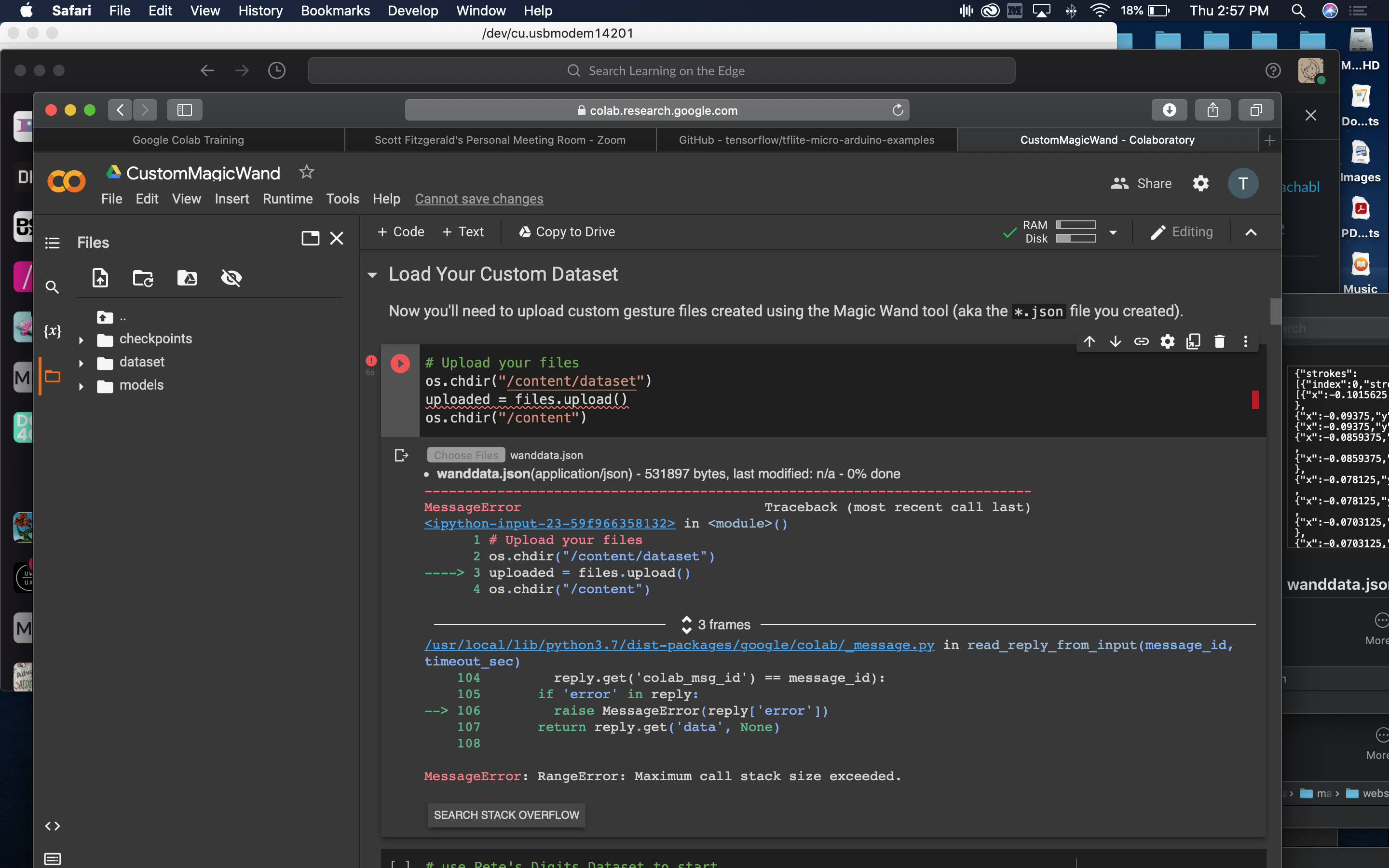

Error 2: This was caused by running the Colab notebook on Safari! 🚫

Training

APRIL, 2022

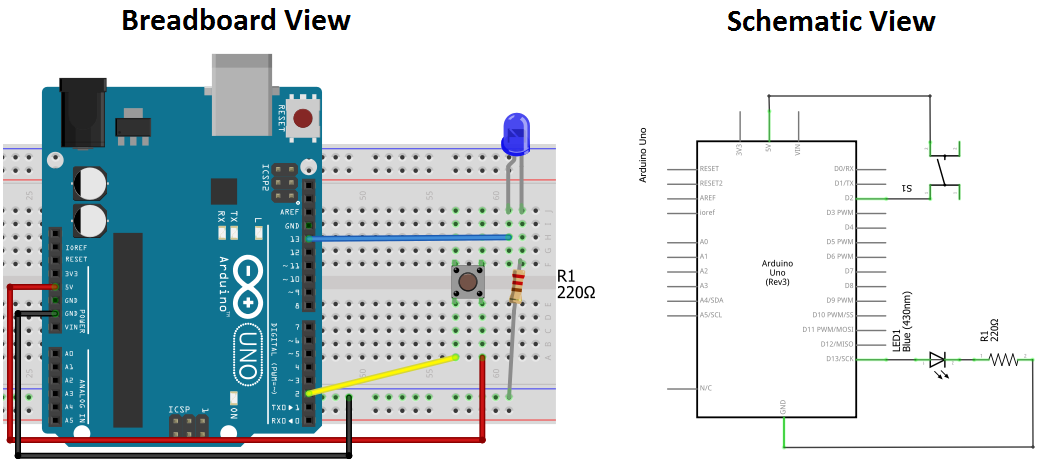

Color detector

I am still working on getting the circuit right ...

I’m not 100% sure how to configure the button. I’ll consult friends to understand what’s wrong with my circuit right now.

I looked at this diagram to understand how photocells connect.

I’ll keep working on it...